Genealogy demands accuracy above all else. One wrong link can unravel generations of work. I wanted to see what AI could do for us researchers. What I found was that it can create more problems than it solves.

A Look at What Happened When I Researched a Family

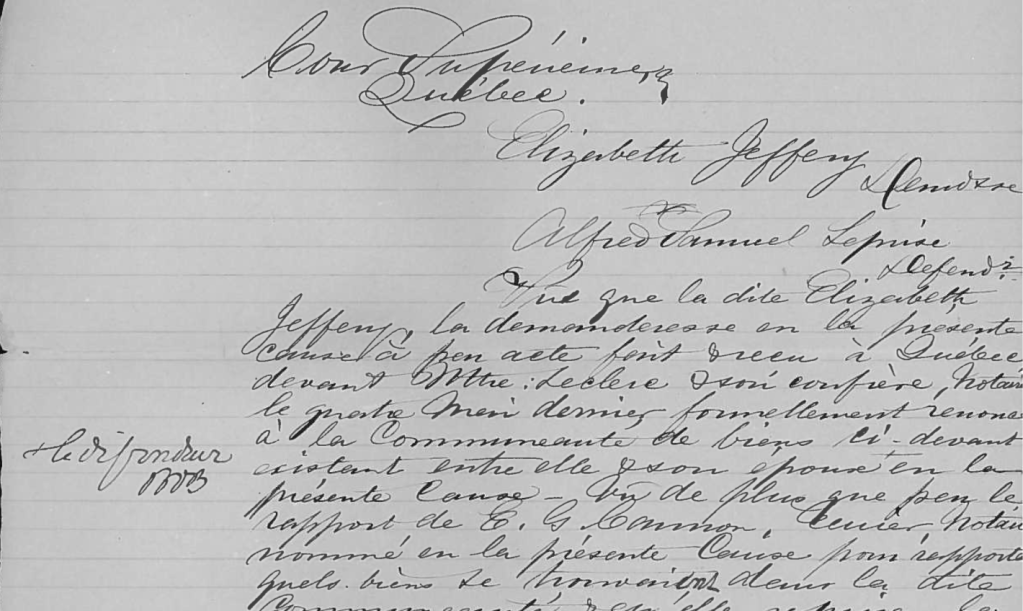

I decided to test its abilities on the Laprise family in my tree. The records I have include parish registers, censuses, and vital and notary records. Many of these records are in French, and since I cannot read French, a major part of the process involved uploading documents (scanned records, text excerpts from parish entries, notary documents, and census pages) for the AI to:

- Provide word-for-word transcriptions of the original French text,

- Translate those transcriptions into English,

- And create summaries or extractions of key genealogical details like names, dates, locations, and relationships.

Having all three side by side let me compare them directly and judge whether the transcription and interpretation seemed accurate. The goal was straightforward: confirm everything step by step through careful review and verification.

Where AI Became a Foe

This is the part that hit hardest and why I had to discard so many AI suggestions:

- Confident but completely wrong connections: The AI linked people who weren’t related, merged unrelated Laprise branches, and created phantom parents or spouses based on superficial similarities.

- Invented or wrong details: It fabricated marriages, death dates, and other events that didn’t match any real records. It also tried to link completely unrelated families and claimed they were connected.

- Fully made-up record sets or sources: The AI suggested entire collections, archives, or specific records, complete with convincing details like volumes, pages, or years. These records simply don’t exist, and a researcher could waste time searching for them in vain.

- No real sourcing: It didn’t disclose that its suggestions came from unreliable user-submitted trees. Errors compounded quickly and spread like wildfire.

Before starting, I explicitly instructed the AI not to make up, imagine, or hallucinate information. It did so anyway. When I pointed out that certain details or records were not real, the AI defended them as accurate instead of correcting itself or admitting the error. That made the problems even more frustrating: it would double down to defend its lie.

These weren’t small mistakes. Hours could be wasted chasing its false leads.

Any “Friend” Moments?

To be fair, AI isn’t entirely useless in genealogy. In this case, it was genuinely helpful for a few specific tasks: it provided word-for-word transcriptions and English translations of French documents as a starting point, which was essential since I don’t read the language. But even those limited upsides were overshadowed by the risks once I moved beyond basic reading and translation, where it wasn’t reliable enough to trust.

Hard Lessons from Laprise Research

After these repeated issues, here are the rules I’m adopting going forward:

- AI is for limited, safe tasks only: Use it for brainstorming ideas and for rough word-for-word transcriptions, translations, or summaries of foreign-language documents. Never rely on it for facts, connections, or final conclusions, and never assume a record or collection it mentions actually exists.

- Verify everything against primary sources: Stick to original images in collections like the Drouin records, FamilySearch, or Ancestry scans. Cross-check transcriptions, translations, and any suggested sources manually.

- Discard without hesitation: If something smells off, reject it. Warning signs include a result that is too tidy, a claim with no source, a chronology that doesn’t add up, or a record set that can’t be found. Rebuild from verified information.

- Skepticism wins: Never assume an AI will stop hallucinating just because you’ve told it not to. Double-check everything it tells you.

Bottom Line

As much as I would like a research buddy, AI is not it. Proceed with caution. It did help with word-for-word transcriptions, translations, and summaries of French records, and that assistance was huge for me. However, when I tried to take it further into actual research, it introduced convincing lies: fully made-up record sets, wrong connections, and fabricated details. These derailed my progress and forced me to discard bad data. The real progress came from careful, manual verification, not from letting AI fill in the blanks.

Treat AI as a curious but unreliable assistant for getting past language barriers. Use it for transcription and translation help, or to create images like the featured image for this post.

*Always question its output, and be especially cautious when it mentions specific records or collections.*

I will continue to use AI, but with a heavy dose of caution and skepticism.

Rating: 3 out of 10.

AI used: Gemini
